MMM: Multi-Track Music Machine

MMM is a generative music system based on the Transformer architecture that is capable of generating multi-track music. It was developed by Jeff Ens and Philippe Pasquier.

Based on an auto-regressive model, the system is capable of generating music from scratch using a wide range of preset instruments. Inputs from one track can condition the generation of new tracks, resampling MIDI input from the user or the system into further layers of music.

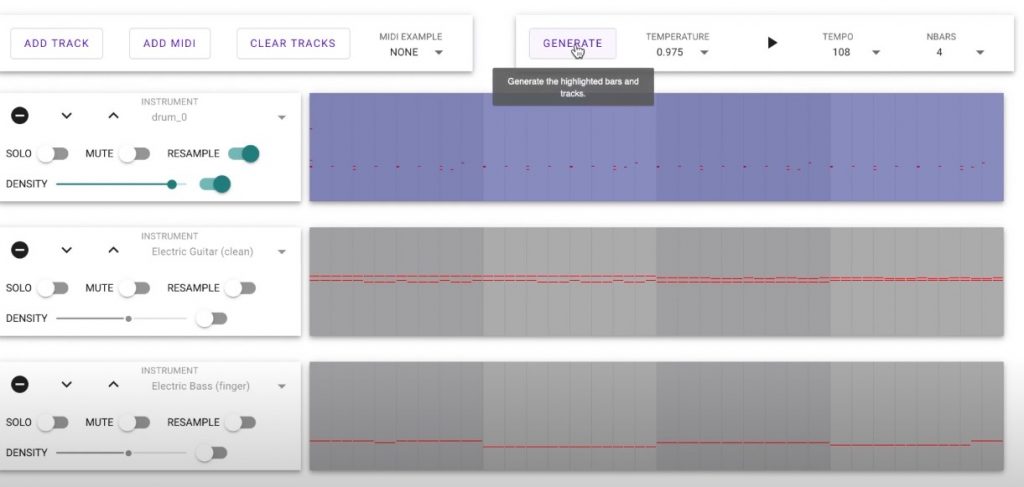

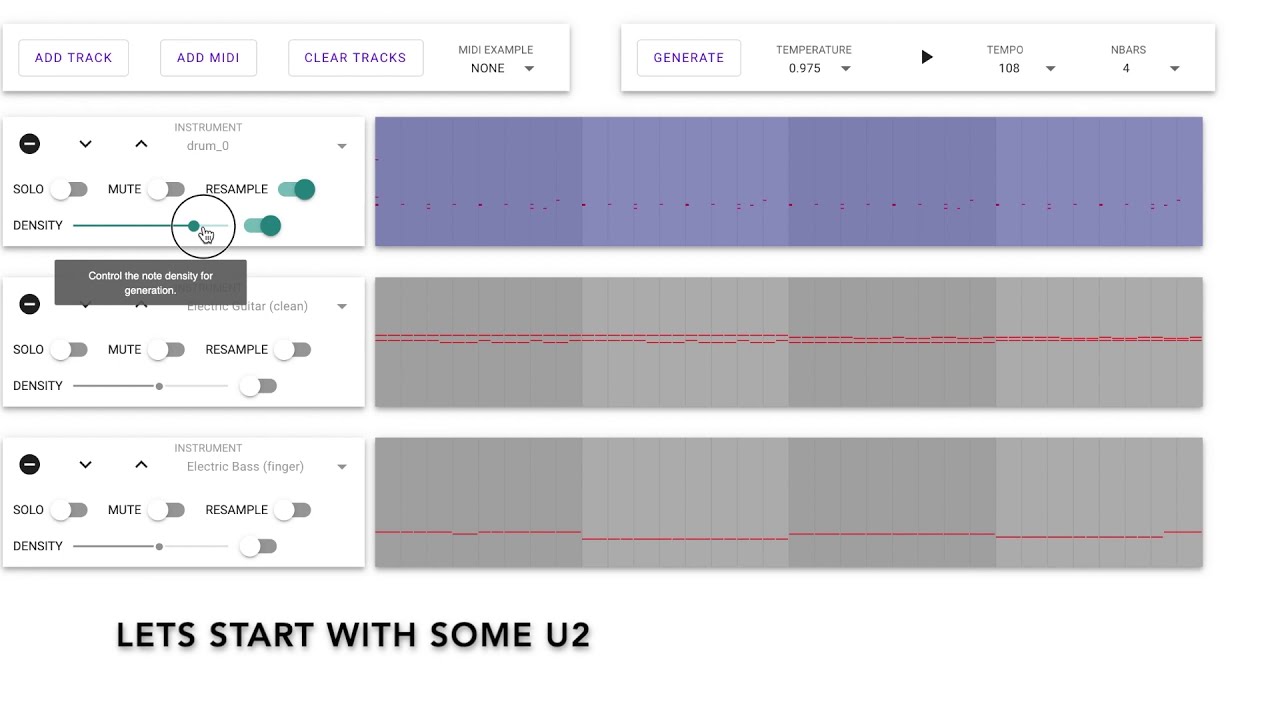

MMM allows the note density (i.e. the number of note onsets) to be specified for each track, so resampling the same track at different densities yields different results. The system also allows a set of bars to be selected, edited, and resampled, affording the user a fine degree of control.
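To make the note-density definition above concrete, here is a minimal sketch that counts note onsets for a track, both overall and per bar. The `Note` tuple layout and the helper names `note_density` and `onsets_per_bar` are assumptions made for illustration; they are not part of MMM's code, and the system may bucket or normalize density differently.

```python
from typing import List, Tuple

# Hypothetical note format: (onset time in beats, MIDI pitch).
Note = Tuple[float, int]


def note_density(notes: List[Note]) -> int:
    """Return the number of note onsets in a track."""
    return len(notes)


def onsets_per_bar(notes: List[Note], num_bars: int,
                   beats_per_bar: float = 4.0) -> List[int]:
    """Count note onsets falling in each bar of a track."""
    counts = [0] * num_bars
    for onset, _pitch in notes:
        bar = int(onset // beats_per_bar)
        # Ignore onsets outside the requested bar range.
        if 0 <= bar < num_bars:
            counts[bar] += 1
    return counts


# Toy track: two onsets in the first bar, one in the second.
track = [(0.0, 60), (1.0, 62), (4.5, 64)]
total = note_density(track)
per_bar = onsets_per_bar(track, num_bars=2)
```

A per-bar count like this is one way a user-facing control could express how busy a resampled bar should be.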

To see the system in action, watch the video below, listen to some of the examples, or try the demo yourself.

Abstract

In contrast to previous work, which represents musical material as a single time-ordered sequence, where the musical events corresponding to different tracks are interleaved, we create a time-ordered sequence of musical events for each track and concatenate several tracks into a single sequence. This takes advantage of the attention mechanism, which can adeptly handle long-term dependencies. We explore how various representations can offer the user a high degree of control at generation time, providing an interactive demo that accommodates track-level and bar-level inpainting, and offers control over track instrumentation and note density.
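The difference between the two representations can be sketched with toy data. The token strings below (`TRACK_START`, `TRACK_END`, `NOTE_<pitch>@<time>`) and the function names are simplified assumptions chosen for illustration, not the vocabulary used in the paper.

```python
from typing import Dict, List, Tuple

# Hypothetical note format: (onset time step, MIDI pitch).
Note = Tuple[int, int]


def interleaved(tracks: Dict[str, List[Note]]) -> List[str]:
    """Single time-ordered sequence: events from all tracks are mixed."""
    events = [(t, name, pitch)
              for name, notes in tracks.items()
              for t, pitch in notes]
    events.sort()  # order by onset time across all tracks
    return [f"{name}:NOTE_{pitch}@{t}" for t, name, pitch in events]


def concatenated(tracks: Dict[str, List[Note]]) -> List[str]:
    """One time-ordered sequence per track, joined end to end."""
    tokens: List[str] = []
    for name, notes in tracks.items():
        tokens.append(f"TRACK_START:{name}")
        for t, pitch in sorted(notes):
            tokens.append(f"NOTE_{pitch}@{t}")
        tokens.append("TRACK_END")
    return tokens


# Toy piece with two tracks.
piece = {"bass": [(0, 36), (4, 38)], "lead": [(2, 72)]}
mixed = interleaved(piece)
per_track = concatenated(piece)
```

In the concatenated form each track occupies a contiguous span of the sequence, so an auto-regressive model can generate a new track conditioned on the complete tracks that precede it. Notes that sound together in different tracks may end up far apart in the sequence, which is where attention over long ranges matters.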

For more details, you can read the paper.

Research Papers

Ens, Jeff, and Philippe Pasquier. “MMM: Exploring Conditional Multi-Track Music Generation with the Transformer.” arXiv preprint arXiv:2008.06048 (2020).

Demo
